If you were born in the 90s or before, you might have heard about the Y2K millennium bug which rattled the whole computer industry. Before we jump into this bug, let’s take a quick look into the history of programming and computer storage.

Timeline of popular programming languages

In the 1950s, FORTRAN, COBOL, and many other programming languages were created. Many more high-level languages followed, and the computer software industry began to grow rapidly.

| Language | Year | Primary use |
| --- | --- | --- |
| FORTRAN | 1954-57 | Numeric |
| ALGOL 60 | 1958-60 | Numeric |
| COBOL | 1959-60 | Business |
| APL | 1956-60 | Vector / Matrix math |
| LISP | 1956-62 | Symbols |
| SNOBOL4 | 1962-66 | Strings |
| PL/1 | 1963-64 | General |
| BASIC | 1964 | Educational |
| PASCAL | 1971 | Educational |
| PROLOG | 1972 | AI/Logic with rules |
| C | 1972 | General |
| SCHEME | 1975 | Educational |
| ADA | 1979 | General |
| Smalltalk | 1971-80 | Applications/Objects |
| C++ | 1982-86 | General/Objects |
| CLOS | 1983-84 | LISP/Objects |
| PERL | 1987-89 | Scripting |
| JAVA | 1991 | Applets, General/Objects |
| JavaScript | 1995 | Web development |
| PHP | 1995 | Web development |

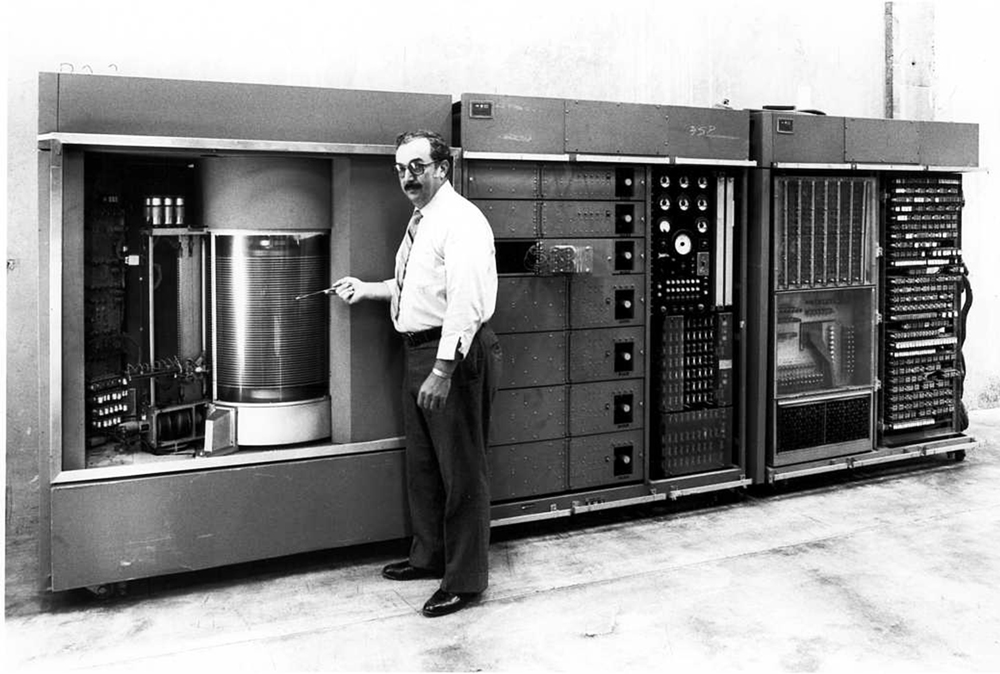

Computer storage

In 1956, IBM introduced the IBM 350, the world’s first hard disk drive. Even though the drive weighed nearly a ton, its storage capacity was only about 5 megabytes.

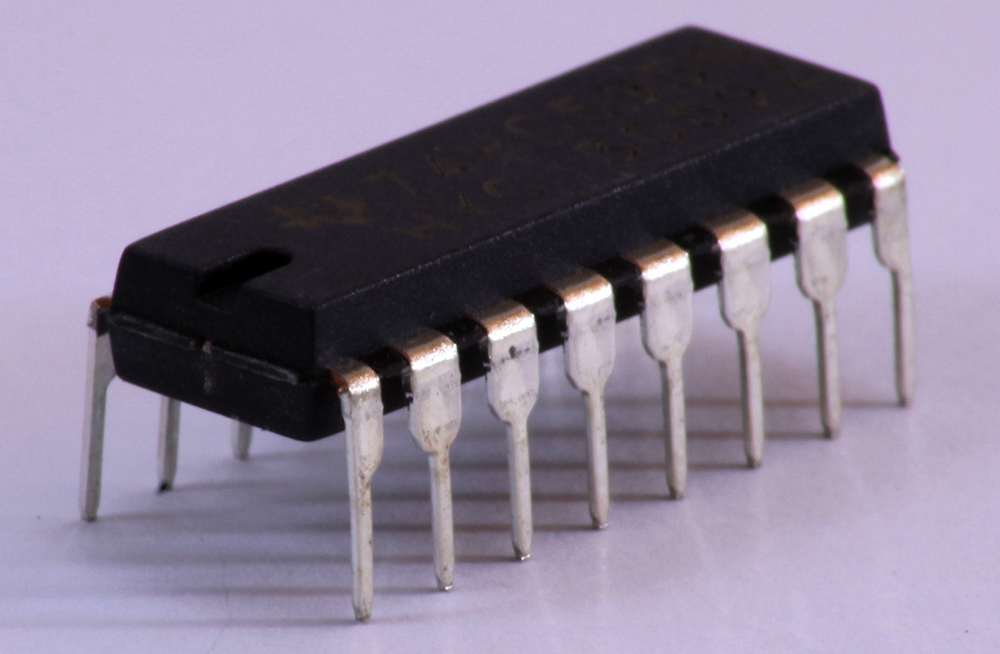

Integrated circuit

In 1947, the world’s first transistor was invented. Then, in 1958, an American electrical engineer named Jack Kilby took tiny transistors and integrated them on a germanium (Ge) semiconductor, creating the world’s first integrated circuit. Integrated circuits revolutionized the computer industry and led to the development of the RAM and CPUs that we use today.

The Y2K millennium bug

After 1980, computerization spread rapidly across almost every field. During that period, however, computer storage was still very expensive. Because of this cost, software engineers wrote code that used as little memory as possible. Normally, when we write a date, we give the day first, then the month, and then the year, in an 8-digit format as shown below.

23/10/1991

But in those days, to save memory, software engineers stored years as 2 digits instead of 4. Saving a date on a computer then took only 6 digits instead of 8.

23/10/91

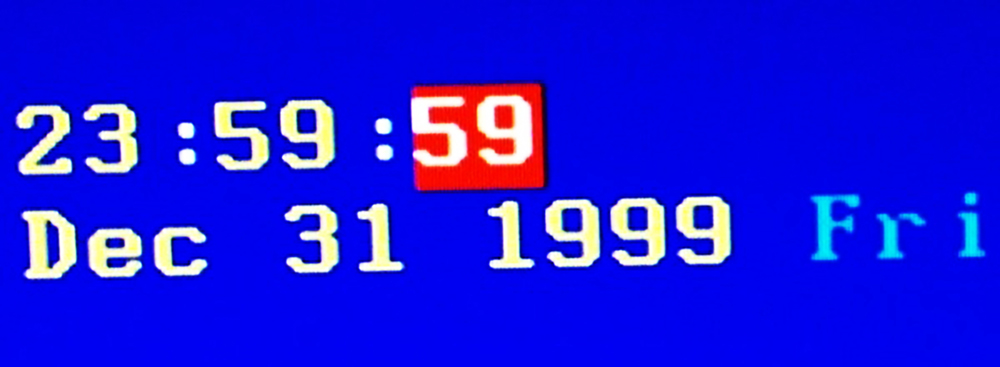
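To make the saving concrete, here is a minimal Python sketch of the two layouts. The function names `pack_ddmmyyyy` and `pack_ddmmyy` are hypothetical, and storing one character per digit is an assumption made for illustration; real systems of the era used a variety of encodings.

```python
from datetime import date


def pack_ddmmyyyy(d: date) -> str:
    """Store a date as 8 digits: day, month, 4-digit year."""
    return f"{d.day:02d}{d.month:02d}{d.year:04d}"


def pack_ddmmyy(d: date) -> str:
    """Store a date as 6 digits: day, month, last 2 digits of the year."""
    return f"{d.day:02d}{d.month:02d}{d.year % 100:02d}"


d = date(1991, 10, 23)
full = pack_ddmmyyyy(d)   # "23101991"
short = pack_ddmmyy(d)    # "231091"
print(len(full), len(short))  # 8 6
```

Two digits per date looks small, but in this sketch it is a quarter of every stored date, repeated across every record in a file.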

Because years were saved as 2 digits, computers stored the year 2000 as 00. There was just one problem with this date format.

23/10/00

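Computers would interpret this 00 as the year 1900, because they had no way to distinguish 1900 from 2000. On top of that, 2000 was a leap year while 1900 was not, so a system that mistook one for the other would also get February 29 wrong. Errors like these could corrupt date calculations throughout a system. The bug is called Y2K because the name is short for “Year 2000”, with K standing for kilo (1,000).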
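The ambiguity can be reproduced in a few lines of Python. This is an illustration only: the helpers `expand_year_1900` and `age_in_years` are hypothetical names, and the sketch assumes a legacy rule that prefixes every 2-digit year with 19.

```python
import calendar


def expand_year_1900(yy: int) -> int:
    """Assumed legacy rule: treat every 2-digit year as 19yy."""
    return 1900 + yy


def age_in_years(birth_yy: int, current_yy: int) -> int:
    """Age computed purely from 2-digit years, as legacy code might do."""
    return expand_year_1900(current_yy) - expand_year_1900(birth_yy)


# Someone born in 1991, checked in 1999 and then in 2000 (stored as 00).
print(age_in_years(91, 99))  # 8
print(age_in_years(91, 0))   # -91, although the correct age is 9

# Leap-year side effect: 2000 has a February 29, 1900 does not.
print(calendar.isleap(2000))                 # True
print(calendar.isleap(expand_year_1900(0)))  # False
```

Any calculation built on the wrong century, such as an age, an interest period, or an expiry date, inherits the same error.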

As a result of this bug, computers and electronics all over the world were predicted to malfunction when 1999 ended and 2000 began. By then, everything from hospitals to nuclear power plants was computerized, so many people feared that the Y2K bug could cause chaos.

What if nuclear missile systems malfunctioned because of the Y2K bug and triggered another world war? To avert this, the United States and Russia set up the Center for Year 2000 Strategic Stability, a joint operation that ran during the transition from 1999 to 2000. In addition, coordinators from over 120 countries established the International Y2K Cooperation Center (IY2KCC), which promoted Y2K awareness worldwide.

The aftermath of the Y2K millennium bug

When midnight arrived on January 1, 2000, no large-scale problems were reported. In some places, card swipe machines stopped processing credit and debit card transactions, but service resumed after a while.

At the Onagawa nuclear power plant in Japan, an alarm sounded two minutes after midnight. And at a nuclear power plant in Ishikawa, Japan, a piece of radiation-monitoring equipment failed at midnight. However, officials stated there was no risk to the public.

Similar minor technical malfunctions were reported all over the world. According to various reports, an estimated $300 billion to $858 billion was spent worldwide to fix the Y2K bug.

Y2K made people realize how important computers are in the modern world. Share your thoughts about Y2K in the comment section below.
